A.I. AND MUSIC CREATION

TL;DR - The past : musicians learned how to play instruments and how to compose songs - recording was done in studios by others and was very expensive; 1990s onwards : digital technology changed the paradigm - musicians still needed to know how to write songs, but playing instruments less important - instead, audio engineering had to be learned in order to record songs in home studios. Today, AI platforms like Suno mean that you can make music by just typing a few prompts - no skills at all needed. This is not a good thing.

THE PRE-DIGITAL AGE

Back in the old days if you wanted to make music you had to do two things.

First, you had to learn to play a musical instrument. You didn’t have to be perfect or a virtuoso, as the punk movement showed us, but you did need to have some sort of basic competence on a guitar, bass, or something.

The second thing you needed to do was learn how to write songs. You could do this by studying other people’s music, listening to your favourite records and working out basic song structures. Or, you could be experimental and just write any kind of music you wanted to, once you had down the basics of playing.

Next came the recording. Back in the old days, you recorded in a recording studio. This was a rare event, and you didn’t really need to be involved in the process beyond turning up and playing your song. A dedicated recording engineer and producer would do everything for you, all of the technical stuff, and at the end of the day, give you your wonderful recording on a tape.

THE DIGITAL AGE

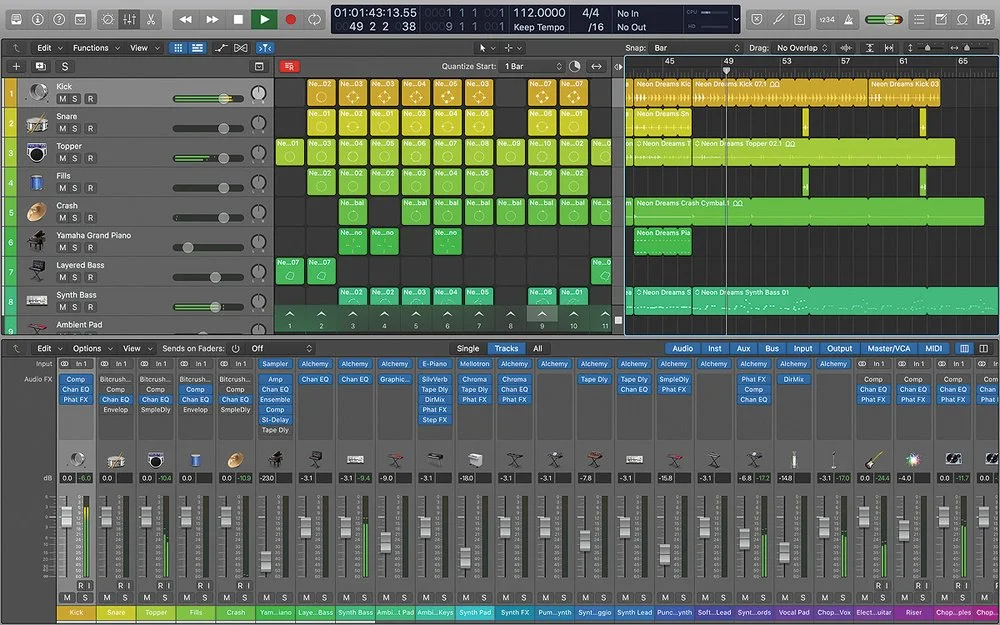

Then, with the advent of computers, we started to move into the realm of home recording : fast forward until today, and it is now possible within the confines of your own bedroom to write, record and release a song with superb audio quality. What makes this possible is the software revolution that brought us the digital audio workstation, or DAW for short. This software allows you to plug real instruments like guitars into your computer and record them digitally, or to use various software instruments, samples of instruments and voices to build songs.

The upshot of this has been a democratisation of music making, in a sense. You actually no longer need to know how to play an instrument. As long as you can tap notes out on a virtual keyboard you can make music. You can also build music up from various loops and samples. It’s an entirely new paradigm.

Not needing to learn an instrument doesn’t make this form of music making any less valid than the old style. You still have to know how to construct a song and understand the kind of elements that sound good. You also need some intuitive sense of melody.

But there’s a new element. You are now the audio engineer and producer, too. In the past, it was the guy in the studio who did all of that for you. But now you have to learn how to use the DAW on your computer. That is quite a complex piece of software, and normally it takes a long time for you to work out how to use it properly in a professional manner in order to produce professional sounding pieces of music.

You might say that a large component of creating ‘good’ music is no longer the ability to play an instrument, but the ability to understand audio engineering, which is in some ways a lot more scientific.

THE AI AGE

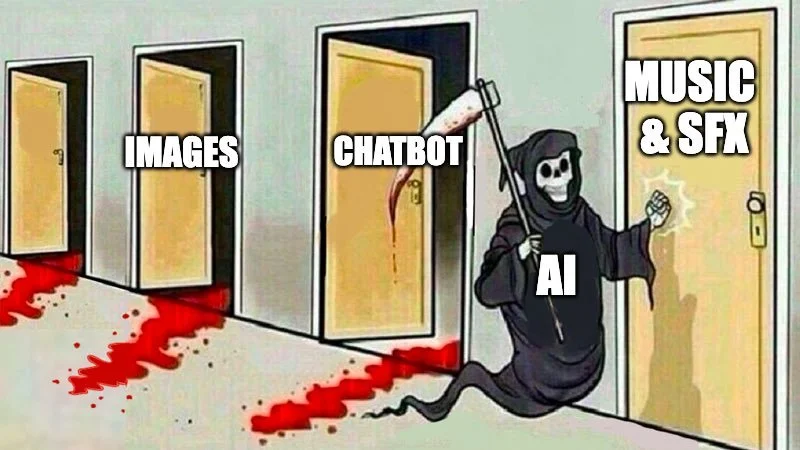

However, we have very recently entered into a new third way of making music. This is with the advent of AI. Yes, I know everybody is talking about AI these days, and I also wrote a previous post about how I think we should be embracing this, and not fearing it.

Elements of AI have already been present in the type of music that I make for quite a few years. For example in Logic Pro, the software that I use to make my music, you can already get various AI ‘session musicians’ to play along with the parts which you’ve already made. There’s an AI bass player, drummer and keyboard player. You can define the style or genre, and have further control over the complexity of their playing, and many other tweaks. In addition, if you’ve specified the chords of the song, these session players will follow along, always in key.

Is this a bad thing? Well, it depends on how you use it. I don’t need an AI bass player since I can actually play bass. I also know how to programme drums, but I sometimes use the AI rock drummer, since it produces patterns that are really hard to tell from real drums. However, I always refine the AI performances after the fact to tailor them specifically to my music and stop them from becoming generic.

And that’s the inherent danger : genericism. Somebody with no musical knowledge whatsoever could just get a chord sequence from the internet, dump it into the software and have the AI generate bass, drums and keyboards for it. OK, they’d still have to come up with a melody, the defining element of any song, and devising one over chords is probably not something just anyone can do.

A lot of people are just lazy and want instant results, and they aren’t perfectionists either, so they’re going to end up with something bland and boring, because that’s what AI in music is geared to - the tedious commonplace stuff of most hits that people want both to listen to and to emulate.

You can see this ‘lowest common denominator’ factor when it comes to ‘loops,’ another aid to composition that has been around for a long time. It isn’t AI, but still plays to the quotidian.

Loops are short, pre-made measures of music (drums, vocals, bass, keys, etc) that can be used as building blocks in compositions. In Apple’s Logic Pro there are thousands of them on hand, and they are ‘royalty free,’ which means that you can legally use them in your commercial releases. Other companies such as Splice or Arcade provide similar ready-made chunks of music that can be thrown into a song.

What’s wrong with this? Again, genericism. Let me relate an early experience I had with Apple’s Logic Pro when I was new to it. The loops were a fun novelty, so I quickly slapped together a few cool sounding parts and crafted a song, which I later released. The main part was a sinister-sounding riff played on a piano. Imagine my horror when a few months later I heard the exact same riff on the soundtrack to a BBC TV show!

That’s the problem in a nutshell - thousands of other people are going to be using the same loops, which are aimed at the kind of music most people like, thereby further shoehorning your efforts into something that is mainstream and samey. All of this takes you further away from the ability to come up with groundbreaking experimental compositions, and from true art. But then again, if you just want to make money, then you’re probably going to be happy to pump out this kind of stuff.

Now, there is nothing inherently wrong with using samples and loops to build compositions. There is the well-respected art form of the collage, a cobbling together of existing items to construct something new. Back in the 90s groundbreaking musicians like DJ Shadow and Amon Tobin used sampling technology to ‘steal’ riffs, drum beats, vocal parts etc from old vinyl and then cut, paste and transform them into something entirely new and quite wonderful. Listen to Shadow’s amazing ‘Endtroducing.....’ album (1996) or Tobin’s ‘Bricolage’ (1997) to get an idea of this kind of thing.

I myself occasionally like to try to make a track entirely out of samples and loops as a kind of challenge and to force myself out of my usual kind of composition. However, the trick is to mutate, mangle and chop up the material, then use effects to creatively transform it into something that is both original and unidentifiable, in that the source material cannot be recognised : nobody could listen to it and say ‘oh, that’s that tired old loop from Logic Pro that everyone and their grandmother has used.’

THE LATEST AI DEVELOPMENTS

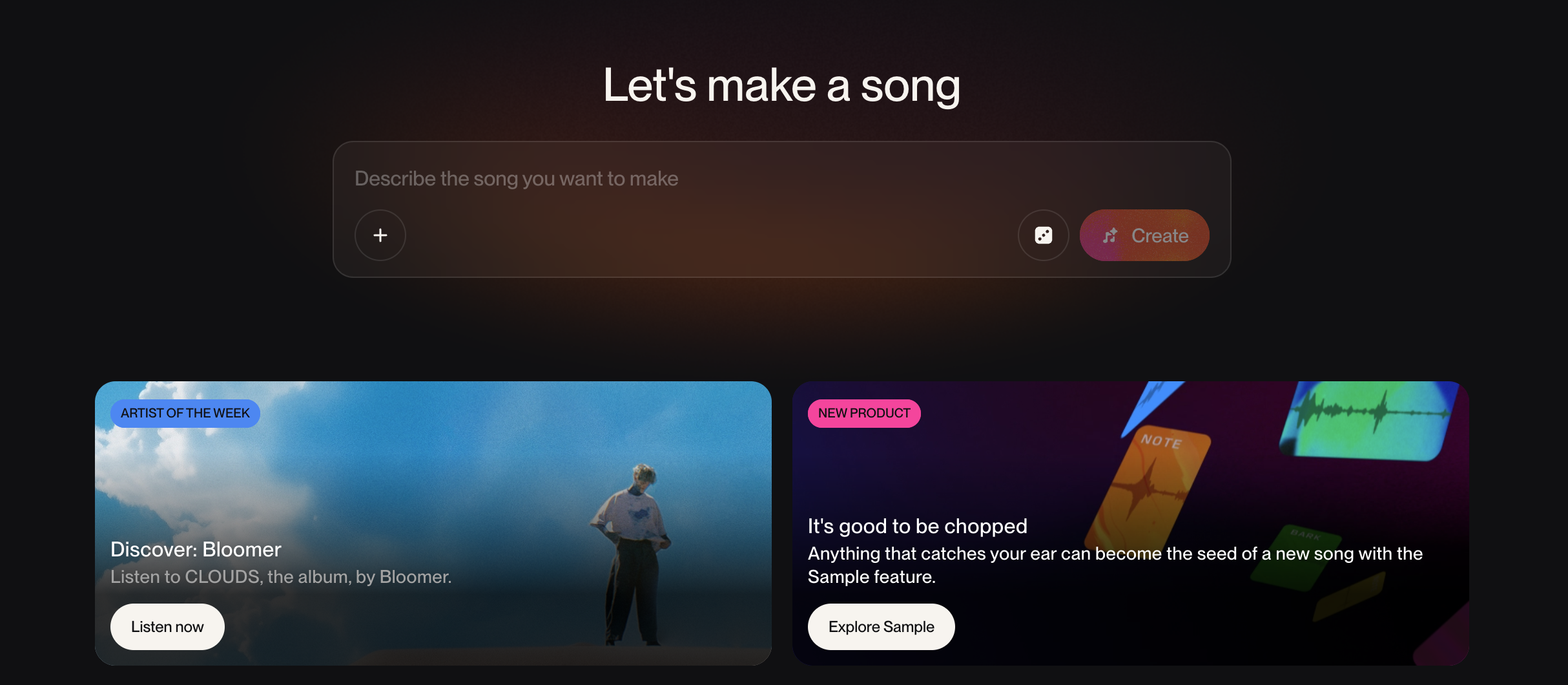

But let’s get back to the topic of AI, because in the last year or so a new threat to artistry has emerged with the advent of such companies as Suno. Here humans are taken one step further away from the creative process of making music. We’ve gone from needing to play instruments, to being able to assemble pre-made chunks of music, to being able to make songs simply from written prompts.

If you haven’t tried Suno, give it a go. There’s a free tier for you to play about with, and there’s no denying that it’s fun. All you need to do is think of the kind of song you want to create and describe it in simple text. As an example, I wrote something like ‘early 80s post-punk dark guitar, bass, drums, synth,’ and added in some random lyrics. After pressing go, it thought for a while, then spat out several different versions based on my prompts.

Have a listen to one of them here:

I immediately noted two things about this track : one, it isn’t particularly good, artistically speaking. Two, it’s a rip-off of the Joy Division song ‘A Means to an End’ - at the 1:07 mark you can hear the same descending riff which characterises that particular track. I didn’t specify Joy Division in the prompt, but that’s clearly one of its references. In fact, it’s so close it might be actionable in a court of law if you tried to release it commercially. And this isn’t surprising at all, really, given that Suno admits that its AI is trained on the millions of songs available on various streaming platforms, which in itself is highly dodgy since the artists it copies from and subsequently mimics don’t get paid for this. (I believe Suno had to pay a large amount of cash to Warner Music Group for this very reason.)

Legal matters aside, this new way of making music is bad simply because by its very nature it’s not going to come up with anything new and revolutionary : yes, all ‘real’ musicians are influenced by their own listening and to a certain extent are subconsciously rehashing elements of what went before, but they still have the agency to make something entirely new and exciting from this - they can push boundaries, which AI cannot do. AI is bound by what it’s been trained on and also it’s algorithmically programmed to stick to the lowest common denominator - making something which sounds like something else that is successful. No exciting musical breakthroughs are possible.

Now there’s still scope for services like Suno to be useful to musicians. You could use it as a starting off point to generate ideas which you otherwise wouldn’t have come up with. You could take these ideas and rework them, adding your own individual stamp to them.

But that’s not what most people are going to do. The selling point is making music instantly with zero effort. Already millions of copycat songs are being churned out and released, and platforms such as Spotify are doing nothing to alert people to the fact that a song might be entirely AI generated.

The point, of course, for Suno, for the ‘musicians’ who use Suno, and for Spotify, is to make the most money with the least possible effort.

Real artists will not be threatened by this flood of garbage, which isn’t a new phenomenon, just an accentuation of a trend. AI generated music is all about appealing to the masses by supplying them with endless iterations of a tried and tested formula. Real artists can still make original challenging art, although they may not be able to make a living from it very easily.

If you are just a casual music fan who likes the mainstream, then you might not even realise that what you’re listening to is pure AI, but I suspect that most people would prefer to listen to actual humans.

I think a line has clearly been crossed : while a lot of recent AI tools have been genuinely helpful to musicians in the creative process, and have allowed kids to record songs in their rooms, platforms like Suno have removed all the skills previously necessary to make music. The human element has been reduced to almost nothing. This is fine for producing bland elevator Muzak, but for anything resembling the spirit of art or true self-expression, it’s an avenue that goes nowhere.

I still believe in embracing AI, but care has to be taken over how it is used. There’s no stopping it now, since Pandora’s box has been opened, so it’s just a case of getting used to it. Expect a world where actual humans making music as an art form are in the minority, and your playlist on Spotify will be full of a never-ending supply of familiar-sounding tunes.

I’ve mentioned this before, but remember George Orwell’s ‘1984’ - the dystopian novel of the future written way back in 1948. In that prophetic tale, the working classes are kept ignorant and quiet by a steady stream of soporific drivel, including songs written by a computer in which the meaningless words and comfortably familiar melodies are endlessly regurgitated.

That’s exactly where we’re at now, ladies and gentlemen.